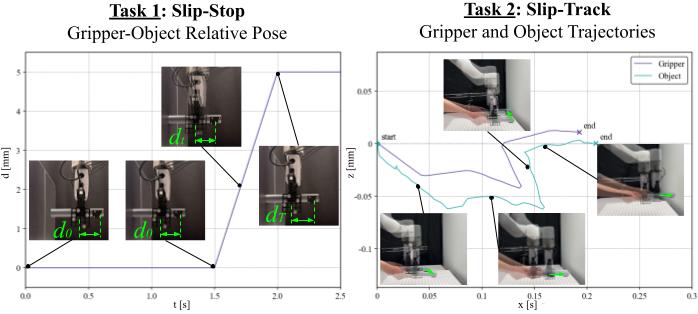

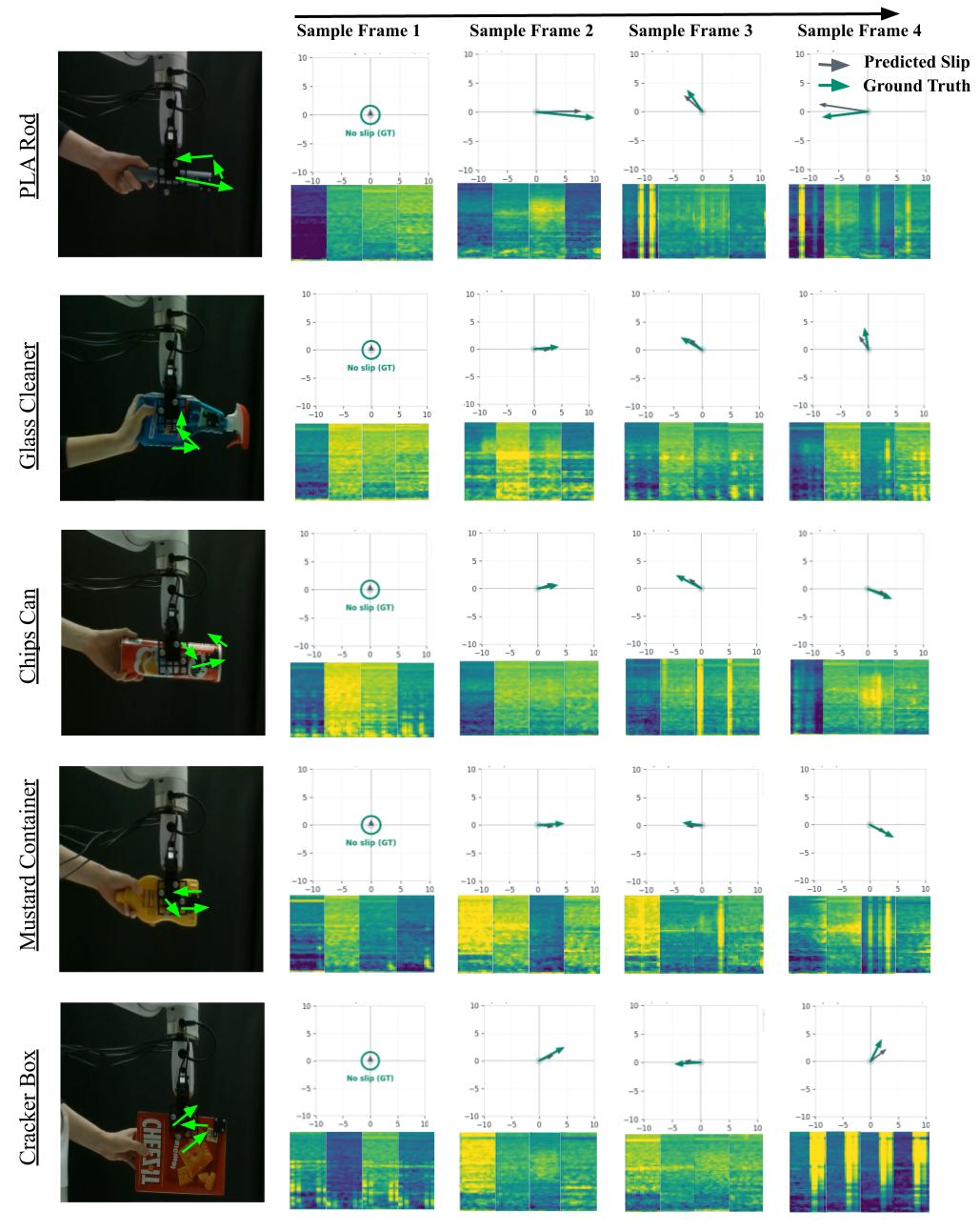

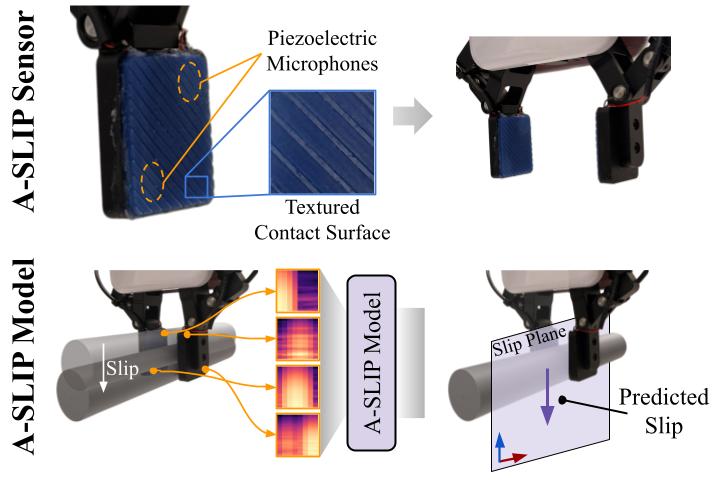

System Overview

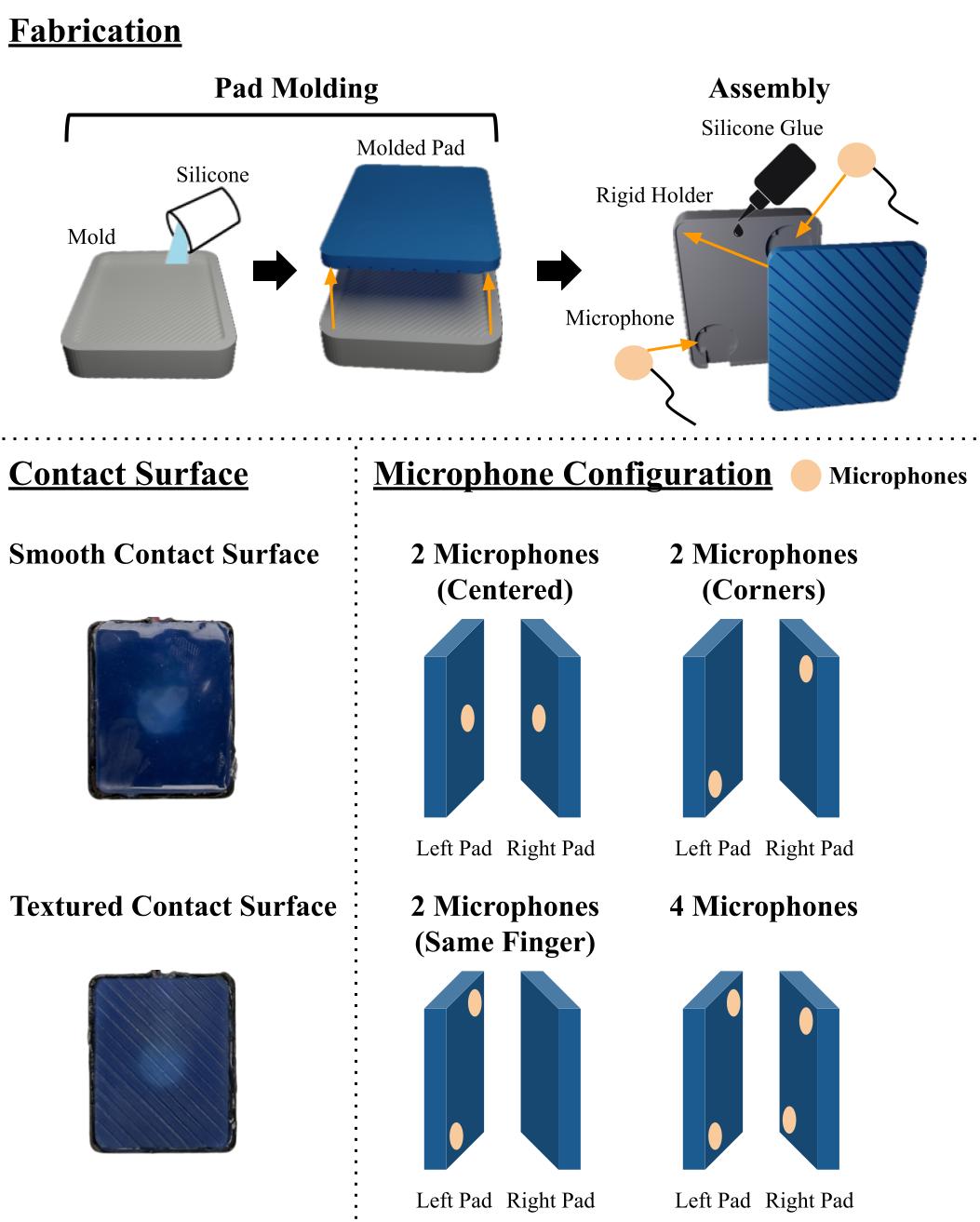

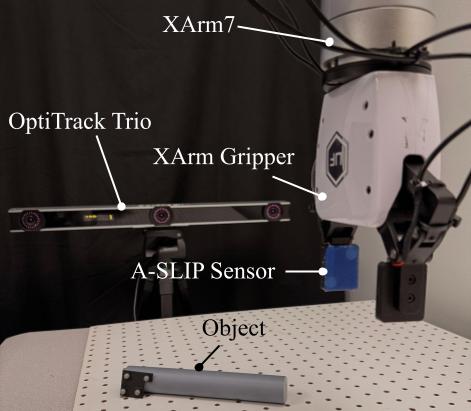

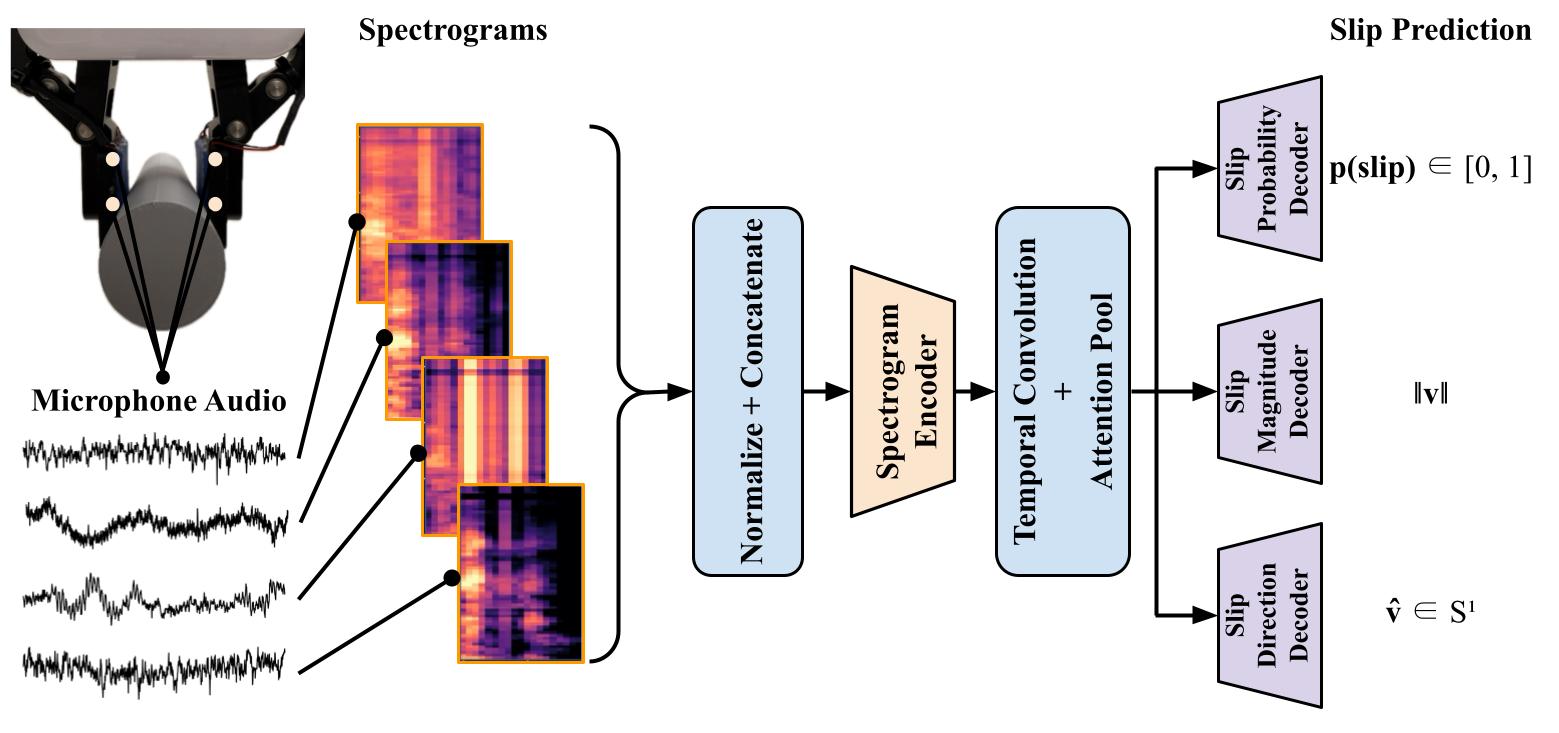

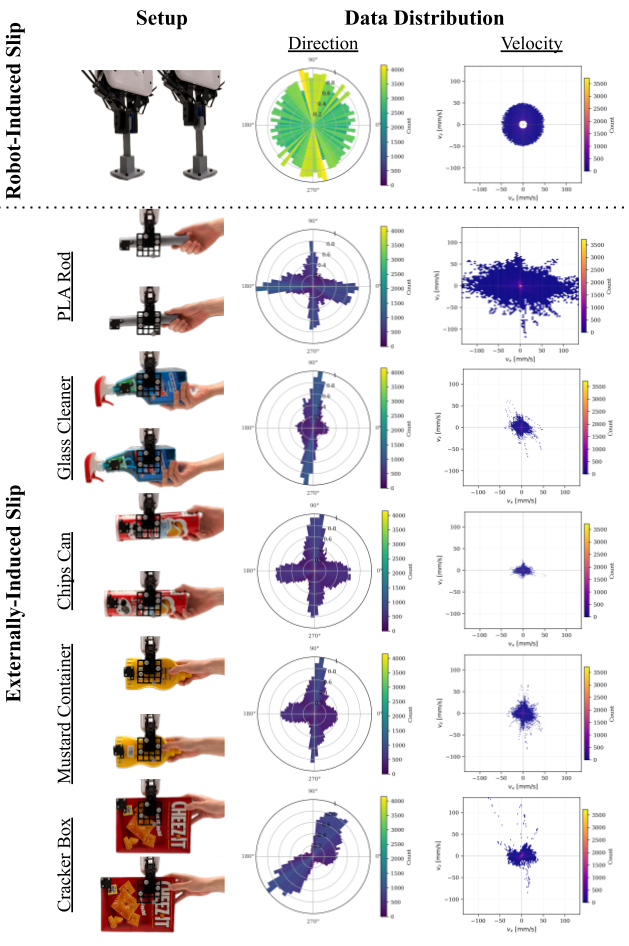

A-SLIP is a complete system for real-time slip estimation in robotic grasping. The system integrates piezoelectric microphones into a parallel-jaw gripper and uses a convolutional neural network (CNN) to process synchronized multi-channel audio spectrograms. From these spectrograms, the network jointly estimates slip presence, direction, and magnitude in the grasp plane.
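The sketch below illustrates, under stated assumptions, the two stages that surround the CNN in a pipeline like this one. The first stage stacks synchronized microphone channels into a channel × frequency × time spectrogram input. The second decodes a joint output into slip presence plus a 2-D slip vector in the grasp plane. Several details are hypothetical and not taken from A-SLIP: the window size (`N_FFT`), the hop length (`HOP`), the periodic Hann window, and the output parameterization (a presence logit, an unnormalized direction vector, and a magnitude). The CNN itself is omitted, and a naive DFT stands in for a real FFT library.

```python
import cmath
import math

# Hypothetical parameters for illustration only; A-SLIP's actual values are not given here.
N_FFT = 64  # samples per analysis window
HOP = 32    # samples between successive windows


def periodic_hann(n):
    """Periodic Hann window, a common choice for STFT analysis."""
    return [0.5 - 0.5 * math.cos(2 * math.pi * i / n) for i in range(n)]


def magnitude_spectrogram(signal, n_fft=N_FFT, hop=HOP):
    """Return a [freq][time] magnitude spectrogram using a naive O(n^2) DFT per frame."""
    win = periodic_hann(n_fft)
    n_bins = n_fft // 2 + 1  # non-negative frequencies of a real-valued signal
    frames = []
    for start in range(0, len(signal) - n_fft + 1, hop):
        seg = [signal[start + i] * win[i] for i in range(n_fft)]
        frames.append([
            abs(sum(seg[n] * cmath.exp(-2j * math.pi * k * n / n_fft) for n in range(n_fft)))
            for k in range(n_bins)
        ])
    # Transpose [time][freq] -> [freq][time]
    return [list(row) for row in zip(*frames)]


def stack_channels(channels, n_fft=N_FFT, hop=HOP):
    """Stack per-microphone spectrograms into a [channel][freq][time] CNN input."""
    if len({len(c) for c in channels}) != 1:
        raise ValueError("need at least one channel, and all channels must be equal in length")
    return [magnitude_spectrogram(c, n_fft, hop) for c in channels]


def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def decode_slip(presence_logit, dir_x, dir_y, magnitude, threshold=0.5):
    """Decode hypothetical joint network outputs into a slip estimate in the grasp plane."""
    p = sigmoid(presence_logit)
    if p < threshold:
        return {"slip": False, "prob": p, "vector": (0.0, 0.0)}
    norm = math.hypot(dir_x, dir_y) or 1.0  # avoid dividing by zero for a null direction
    mag = max(magnitude, 0.0)               # clamp: slip magnitude cannot be negative
    return {"slip": True, "prob": p, "vector": (mag * dir_x / norm, mag * dir_y / norm)}


if __name__ == "__main__":
    # Four synchronized channels carrying a tone that falls exactly on DFT bin 8
    tone = [math.sin(2 * math.pi * 8 * n / N_FFT) for n in range(256)]
    x = stack_channels([tone] * 4)
    print(len(x), len(x[0]), len(x[0][0]))  # -> 4 33 7 (channels, freq bins, frames)
    print(decode_slip(2.0, 3.0, 4.0, 10.0)["vector"])  # -> (6.0, 8.0)
```

Keeping direction and magnitude as separate outputs lets a presence estimate below threshold suppress the slip vector entirely. Normalizing the direction vector also keeps the reported magnitude independent of the direction head's scale. A production pipeline would replace the naive DFT with an FFT-based STFT and feed the stacked array to the trained CNN.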